The true value of IoT lies in the merging of real-time data collected by connected devices with historical data, and in its ability to enable appropriate actions, according to SAS Australia’s IoT domain lead, Kevin Kalish.

At the recent SAS Analytics Insight event in Sydney, Kalish said that data collected ‘at the edge’ must be combined with cloud data to drive meaningful action if any value is to be obtained from the Internet of Things.

“Extracting value from IoT is all about generating that value from data, from historical data and from real-time data,” he said.

“IoT is also all about enabling action – real-time action, human or machine action, autonomous action, pre-emptive or reactive action.”

Kalish envisages a future where sensors will be “in every imaginable place”, from traffic-monitoring sensors in roads and vehicle sensors that track driving behaviour to sensors in clothing and medicine.

This future smart world will “significantly change how you should approach innovation, how you connect with customers, how companies will be managed,” he said.

IoT will exponentially increase the amount of data available, and Kalish said that organisations must weigh a number of considerations about data collection and management in an IoT-equipped world.

Kalish said data quality will become an issue in IoT systems, given the conditions that connected devices are deployed in.

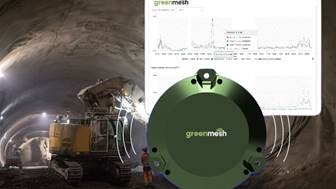

“IoT devices often operate in noisy, dynamic environments and sensors do make mistakes,” he said.

“Sometimes they lose power, lose connectivity, or require recalibration, which results in them logging incorrect data.”

Kalish also said that the delta in collected data is more valuable than the repeated collection of identical readings over a period of time.

“It’s the change in conditions around us that we want to capture,” he said.
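As a rough illustration of capturing change rather than repetition, the sketch below keeps a reading only when it differs from the last kept reading by more than a threshold. The function name `changed_readings`, the sample temperatures and the 0.5 threshold are all hypothetical and do not come from Kalish or SAS.

```python
from typing import Iterable, Iterator, Optional


def changed_readings(readings: Iterable[float], threshold: float) -> Iterator[float]:
    """Yield a reading only when it moves more than `threshold` from the last one kept."""
    last: Optional[float] = None
    for value in readings:
        if last is None or abs(value - last) > threshold:
            last = value
            yield value


# Hypothetical temperature samples; near-identical readings are dropped.
kept = list(changed_readings([20.0, 20.1, 20.0, 22.5, 22.6, 19.0], threshold=0.5))
```

Here only the first reading and the two large shifts survive, which is one simple way to record “the change in conditions” instead of a run of near-identical values.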

He said that data quality issues must be managed in-stream to ensure that business and predictive models can be created, and appropriate actions taken.
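One minimal way to picture in-stream quality management is a filter that discards missing or physically implausible readings before they reach any model. The sketch below assumes a sensor with a known valid range; the name `clean_stream`, the range limits and the sample values are illustrative assumptions, not a description of any SAS product.

```python
from typing import Iterable, Iterator, Optional


def clean_stream(
    readings: Iterable[Optional[float]], low: float, high: float
) -> Iterator[float]:
    """Pass through only readings that are present and inside the valid range."""
    for value in readings:
        if value is None:
            # Missing sample, e.g. after a power or connectivity loss.
            continue
        if low <= value <= high:
            yield value
        # Out-of-range values (e.g. a miscalibrated sensor) are dropped.


# Hypothetical samples: None marks a dropout, -999.0 and 150.0 are implausible.
cleaned = list(clean_stream([21.5, None, -999.0, 22.0, 150.0], low=-40.0, high=85.0))
```

A real deployment would likely log or repair the rejected values rather than silently drop them; the point here is only that the check happens as the data flows, not after it has been stored.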

IoT fuelling predictive modelling

Kalish said that predictive modelling is now possible with the advent of the Internet of Things, but that it depends on the context and metadata of the devices being monitored.

“The model would need to know the context of the environment that a piece of equipment operates in, for example,” he said.

“This context is critical for the model’s ability to be predictive, and you don’t necessarily get that from the equipment sensors themselves.

“You want to enrich the sensor data with your historical and enterprise data [to provide that context].”

Kalish said that the location from which these predictive models are executed will have a significant effect on the benefits an IoT system will provide, and that a company’s business and economic models will dictate where these models should be run.

“If the decision that comes out of a given predictive model is tightly aligned with a single point in time, it can go and live out there in the field and operate independently,” he explained.

“Real-time streaming analytics will become an essential part of how organisations operate, and machine learning and deep learning systems will become integral to your business.”

IoT and data management

Kalish said that his experience with IoT deployments for SAS clients has led to his belief that data management is set to undergo dramatic change.

“Data management in the age of IoT is likely to move from ‘extract, transform and load’ to ‘stream, score and store’,” he said.
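The “stream, score and store” idea can be sketched as a loop that scores each event as it arrives and stores only what the score marks as worth keeping. This is one possible reading of the phrase, not Kalish’s definition; the function `stream_score_store`, the toy scoring rule and the 0.5 threshold are hypothetical.

```python
from typing import Callable, Dict, Iterable, List

Event = Dict[str, float]


def stream_score_store(
    events: Iterable[Event],
    score: Callable[[Event], float],
    threshold: float,
) -> List[Event]:
    """Score each incoming event and store only those at or above the threshold."""
    store: List[Event] = []
    for event in events:                       # stream
        risk = score(event)                    # score
        if risk >= threshold:
            store.append({**event, "risk": risk})  # store
    return store


def toy_score(event: Event) -> float:
    """Stand-in for a predictive model: scale vibration into a 0 to 1 risk value."""
    return min(event["vibration"] / 10.0, 1.0)


events = [
    {"id": 1, "vibration": 2.0},
    {"id": 2, "vibration": 9.0},
    {"id": 3, "vibration": 5.0},
]
stored = stream_score_store(events, toy_score, threshold=0.5)
```

In contrast to extract, transform and load, the scoring step runs before anything is written, so storage holds scored events rather than raw readings awaiting later analysis.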

“When we talk about big data in the context of IoT, I actually think that big data is a big miss, because the focus to a degree has been – and still is – on storing the data more cheaply.

“The view of big data in IoT is that storage is a commodity, and that sometimes causes businesses to desire to become a data hoarder.”

Kalish said that the real innovation is in the cost to compute, and that true transformational change comes from the ability to harness immense compute power to ask critical questions and get critical answers at the right time.

IoT ‘fog’ rolling in

Kalish believes that cloud platforms should not be the only method used for hosting IoT systems.

“Many people believe that cloud architecture is a one-stop shop for IoT applications, but it’s not sufficient because in many use cases, sub-second decisions are required,” he explained.

“Edge architecture in the field – sometimes referred to as the ‘fog’ – is there to complement cloud architecture, forming an intermediary player between the devices and the cloud, and extending the capabilities of cloud computing down to the edge of the IoT network.”

Kalish explained that fog computing enables the execution of automated decisions and analytics closer to the data source, via processing performed on smart sensors or IoT device gateways.

Furthermore, he said that transmitting the massive amounts of sensor data back to the cloud is “simply not viable”, citing reasons such as expense, infrastructure resource requirements and slower response times.

“It’s likely that what you will send to the cloud will be aggregated data, or events data, or only the data that is critical for future analytics,” he said.
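As a simple picture of sending aggregated data instead of raw readings, the sketch below reduces a window of sensor values collected at the edge to a four-field summary. The function `summarise_window` and the sample window are hypothetical, and the code assumes the window is non-empty.

```python
from statistics import mean
from typing import Dict, List


def summarise_window(readings: List[float]) -> Dict[str, float]:
    """Collapse a non-empty window of raw readings into a compact summary."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
    }


# A gateway might upload this summary instead of every raw reading in the window.
summary = summarise_window([1.0, 2.0, 3.0, 6.0])
```

However many readings a window holds, the payload sent to the cloud stays the same size, which is the kind of saving in bandwidth and cost the fog approach is aiming for.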