As we’ve reported previously, the Internet of Things is changing data science. A visiting executive from analytics company SAS has gone into further detail on how IoT, along with artificial intelligence, is reshaping the industry.

Oliver Schabenberger, executive VP of SAS’s research and development division and its recently appointed CTO, was in Sydney to discuss updates to the new SAS Viya analytics platform and to share his thoughts on the future of the analytics industry.

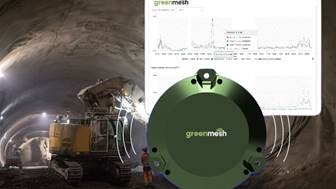

He said that IoT has made edge analytics increasingly important: data is now observed along a continuum, and different techniques must be deployed depending on the observation point, the accuracy required and the speed of data movement.

“We can no longer just think about processing data in the cloud; we also have to think about event stream processing,” he added.

He also said that analytics software is quickly transitioning into the cognitive space, where sensing, listening and gesturing will become common forms of input, and reading and writing of human-like responses will become common forms of output.

“Now we expect things to adapt, to learn, to be ubiquitous, to be situationally aware, and be historically aware,” he said.

“We’re replacing processes that are very deterministic and rule-based with automated, self-learning processes.”

Two types of machine learning

Schabenberger said that traditional statistical modelling, where humans would select a model they believe would best fit the data collected, will be replaced by data-driven machine learning.

He distinguishes, however, between two types of machine learning: classical and modern.

“Classical machine learning is where data drives the selection of the algorithm. It allows us to use the data with different techniques, separate the data into validation, training and test data to figure out if the model we’ve trained works well with unseen data,” he said.
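The split Schabenberger describes can be sketched in a few lines of Python. This is a minimal illustration only, not SAS's method: the function name `split_dataset`, the 60/20/20 proportions and the fixed seed are all assumptions made for the example.

```python
import random


def split_dataset(rows, train_frac=0.6, val_frac=0.2, seed=0):
    """Shuffle rows and split them into training, validation and test subsets.

    The fractions are illustrative assumptions; whatever is left after the
    training and validation portions becomes the test set.
    """
    if train_frac <= 0 or val_frac < 0 or train_frac + val_frac >= 1:
        raise ValueError("fractions must leave some data for the test set")

    shuffled = list(rows)
    # A seeded generator keeps the split reproducible between runs.
    random.Random(seed).shuffle(shuffled)

    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)

    train = shuffled[:n_train]                    # used to fit the model
    validation = shuffled[n_train:n_train + n_val]  # used to compare candidate models
    test = shuffled[n_train + n_val:]             # held back as unseen data
    return train, validation, test


train, validation, test = split_dataset(range(100))
print(len(train), len(validation), len(test))
```

The model is fitted on the training portion, candidate techniques are compared on the validation portion, and the test portion stands in for the "unseen data" mentioned in the quote.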

He added that this form of machine learning is not learning in the purest sense, but rather training a system to shape and classify data it receives.

“What really interests me is ‘modern machine learning’, where algorithms are not explicitly programmed to do anything,” he noted.

“The algorithms actually acquire skills and learn tasks when the algorithm is exposed to data.”

He said the appeal of modern machine learning is that the data programs the algorithm itself.

“It also promises automation. You can do things without deep domain knowledge, such as develop a fraud model without requiring people that have studied credit card fraud or debit card fraud, for example,” he explained.

“All you need to do is to have enough data of transactions to let an AI network learn how to classify them.”

Schabenberger said that the technology that enables this level of machine learning, neural networks, has been around for over 30 years, but has only recently become significantly more capable, owing to two factors.

“The difference is compute power and data. Up until recently, most neural networks had at most two layers, whereas now [they are] going into the hundreds of layers,” he said.

“But even with just ten layers, you add so much depth with every layer you add, increasing the capability to abstract the process you’re trying to learn.

“So we have the compute power to go deep, and we have the big data we need through concepts like IoT to train those models well.”
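To make the idea of depth concrete, the following rough Python sketch stacks fully connected layers so that each one transforms the output of the one before it. Everything here is an assumption for illustration: the helper names `make_layer`, `build_network` and `forward`, the layer width of 8, the ReLU activation and the random, untrained weights. It shows only how a two-layer and a ten-layer network differ structurally, not how either would be trained.

```python
import random


def make_layer(n_in, n_out, rng):
    """Return (weights, biases) for one fully connected layer with small random weights."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def build_network(n_inputs, hidden_width, n_layers, seed=0):
    """Stack n_layers layers; the first takes n_inputs values, the rest take hidden_width."""
    rng = random.Random(seed)
    sizes = [n_inputs] + [hidden_width] * n_layers
    return [make_layer(sizes[i], sizes[i + 1], rng) for i in range(n_layers)]


def forward(layers, inputs):
    """Pass inputs through each layer in turn, applying ReLU after every layer."""
    activations = list(inputs)
    for weights, biases in layers:
        # Each layer re-combines the previous layer's outputs, so later
        # layers operate on progressively more abstract features.
        activations = [
            max(0.0, sum(w * a for w, a in zip(row, activations)) + b)
            for row, b in zip(weights, biases)
        ]
    return activations


shallow = build_network(n_inputs=4, hidden_width=8, n_layers=2)
deep = build_network(n_inputs=4, hidden_width=8, n_layers=10)

sample = [0.5, -1.2, 3.0, 0.1]  # hypothetical input values
print(len(shallow), len(forward(shallow, sample)))
print(len(deep), len(forward(deep, sample)))
```

In practice the weights would be learned from large volumes of data rather than drawn at random, which is where the compute power and data Schabenberger mentions come in.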